For over two and a half years, I worked as a Product Manager at Greator. I was responsible for further developing our online education platform together with two developers.

Greator is the biggest platform for personal development and coaching education in Germany. The company aims to transform people’s lives with various online education products.

The central challenge across our products was to create online education that got our participants the results they wanted while remaining scalable enough to work equally well with 10 or 10,000 participants.

The Challenge

One specific challenge was the final exam of our premium courses. Every premium course taught participants how to coach other people in practice. The final exam was meant to test this skill set and ensure that only participants with sufficient practical skills would pass.

In its first iteration, the final exam was a live coaching session with an expert coach. The participant would organize a coachee and complete a coaching session. The expert coach would be present and give feedback at the end. To make this work, everyone had to be available in the same time slot. This consumed a lot of resources, and with a growing number of courses and participants, it did not scale.

There were problems with the scheduling itself. Sometimes it was hard for participants to find a time slot with an examiner. In some cases, coachees didn’t show up. This created a lot of frustration on all sides.

So we asked ourselves: How might we create a final exam that ensures the quality of our course alumni but is also cost-efficient and scalable?

Ideation

We looked at solutions from competitors, which included:

- Practical live session with the examiner (as we had up to that point)

- Full completion of theoretical course materials (this was one part of our requirements, but we wanted a practical examination to ensure quality)

- Final quiz (also part of our requirements, but too focused on theory)

- Final assignment (for example, an essay, but this was too far removed from practical coaching skills)

None of these solutions satisfied our requirements. Instead, we looked for ways to automate more of our existing process and reduce the resources needed per exam.

The two major friction points were the scheduling and the actual execution of the exam. We decided to replace the live exam with a pre-recorded coaching session that the participant uploads to the platform. The expert coach, in the role of examiner, could then review the exam asynchronously. This would solve the scheduling problem and make the review process significantly easier. The whole process could be automated, and the only manual step would be the exam review.

User Flow

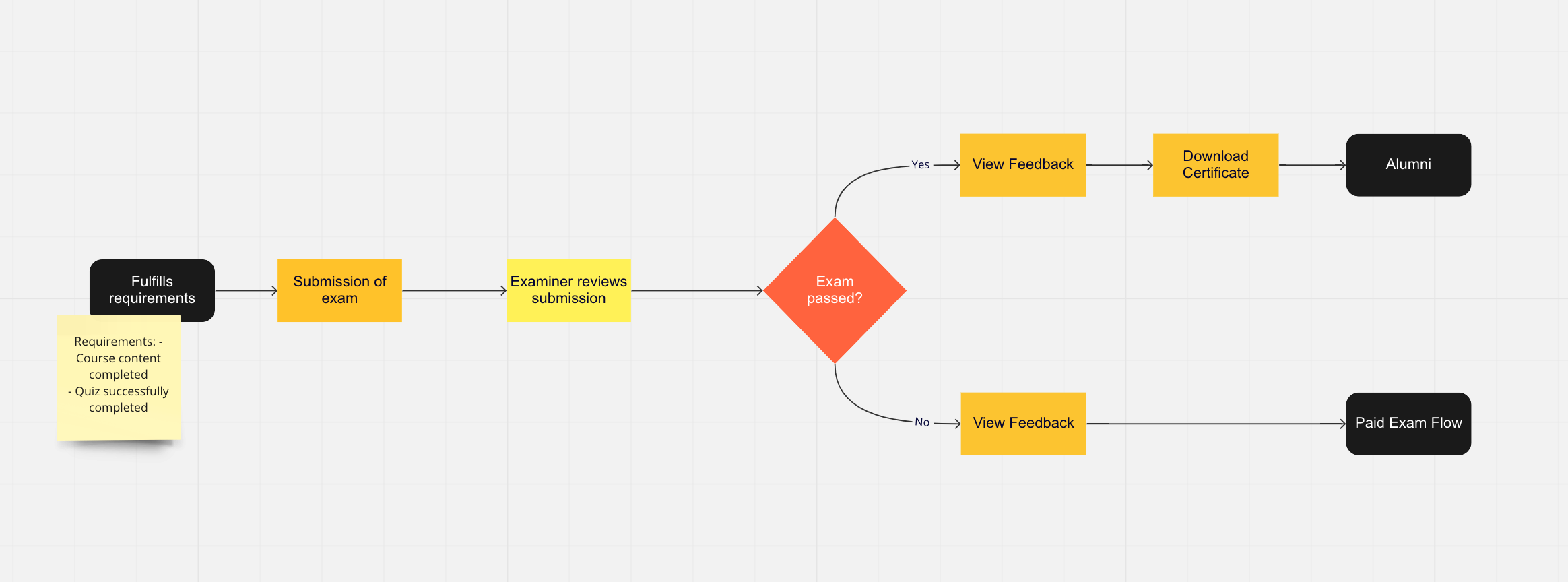

This was the high-level participant (and examiner) journey:

Simple user flow

The exam was only available to participants if they fulfilled the requirements:

- Completed all theoretical content

- Successfully passed the final quiz

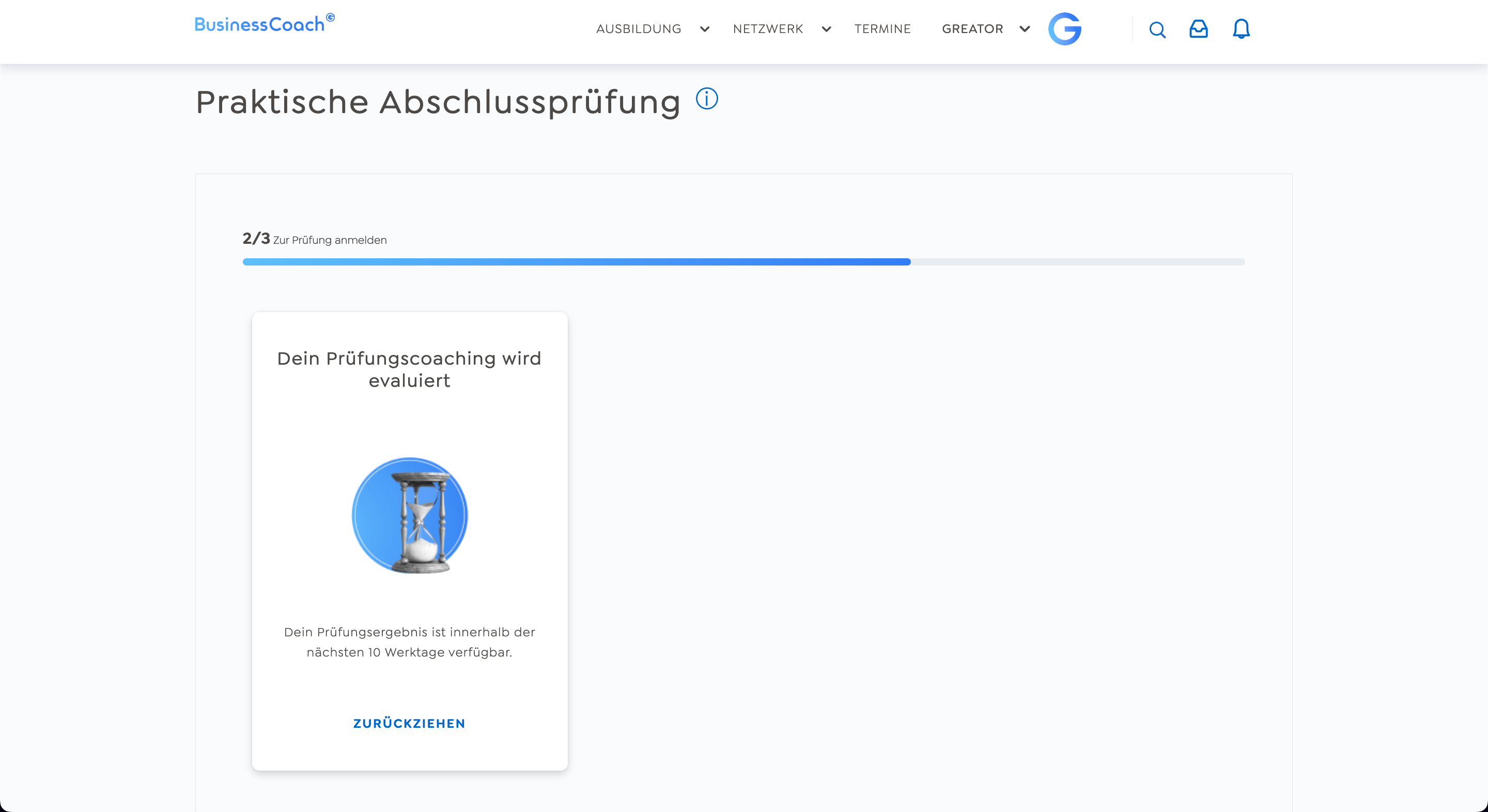

The participant would upload the exam and wait for the examiner’s feedback.

The feedback process is standardized, so anyone designated as an examiner can complete it.

If the participant passes the exam, they can download a personalized certificate that is automatically generated for them.

If the participant doesn’t pass the exam, they can re-submit as many times as they want. However, only the first submission is free. Every subsequent submission is charged, so the participant has to provide their payment details.
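The rules of this flow can be summarized in a short Python sketch. It is only an illustration of the logic described above, not the platform's actual code: all names (`Participant`, `ExamStatus`, `submit_exam`, and so on) are hypothetical, and payment is reduced to a simple flag.

```python
from dataclasses import dataclass
from enum import Enum


class ExamStatus(Enum):
    LOCKED = "locked"        # requirements not yet fulfilled
    OPEN = "open"            # exam can be submitted
    IN_REVIEW = "in_review"  # waiting for the examiner's feedback
    PASSED = "passed"
    FAILED = "failed"


@dataclass
class Participant:
    # Hypothetical fields, chosen only to mirror the rules in the text.
    completed_all_content: bool = False
    passed_final_quiz: bool = False
    has_payment_details: bool = False
    submissions: int = 0
    status: ExamStatus = ExamStatus.LOCKED


def refresh_access(p: Participant) -> None:
    """Unlock the exam once both requirements are fulfilled."""
    if (p.status is ExamStatus.LOCKED
            and p.completed_all_content
            and p.passed_final_quiz):
        p.status = ExamStatus.OPEN


def submission_is_free(p: Participant) -> bool:
    """Only the first submission is free."""
    return p.submissions == 0


def submit_exam(p: Participant) -> None:
    """Accept an upload if the exam is open or a previous attempt failed."""
    refresh_access(p)
    if p.status not in (ExamStatus.OPEN, ExamStatus.FAILED):
        raise ValueError("exam cannot be submitted in status " + p.status.value)
    if not submission_is_free(p) and not p.has_payment_details:
        raise ValueError("payment details required for a repeat submission")
    p.submissions += 1
    p.status = ExamStatus.IN_REVIEW


def record_review(p: Participant, passed: bool) -> None:
    """Store the examiner's decision for the pending submission."""
    if p.status is not ExamStatus.IN_REVIEW:
        raise ValueError("no submission is waiting for review")
    p.status = ExamStatus.PASSED if passed else ExamStatus.FAILED


def can_download_certificate(p: Participant) -> bool:
    return p.status is ExamStatus.PASSED
```

Modeling the exam as a small set of explicit states keeps the one manual step (the review) separate from everything that can run automatically, such as unlocking the exam and issuing the certificate.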

The Solution

Designing the interface was relatively straightforward. We already had high-fidelity components in Figma from previous projects. The fundamental structure of the interface was based on another feature (practice coaching) with which participants were already familiar.

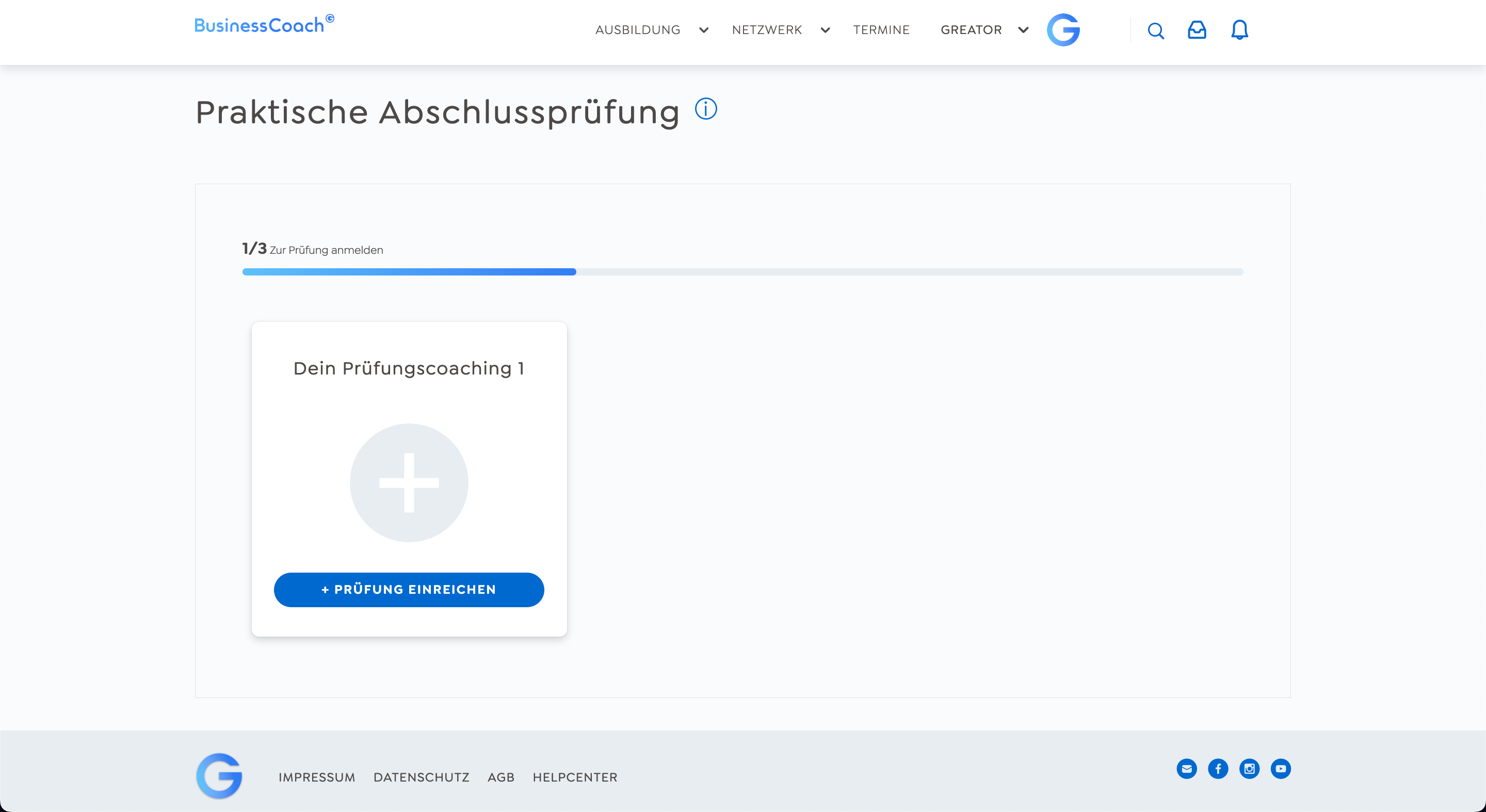

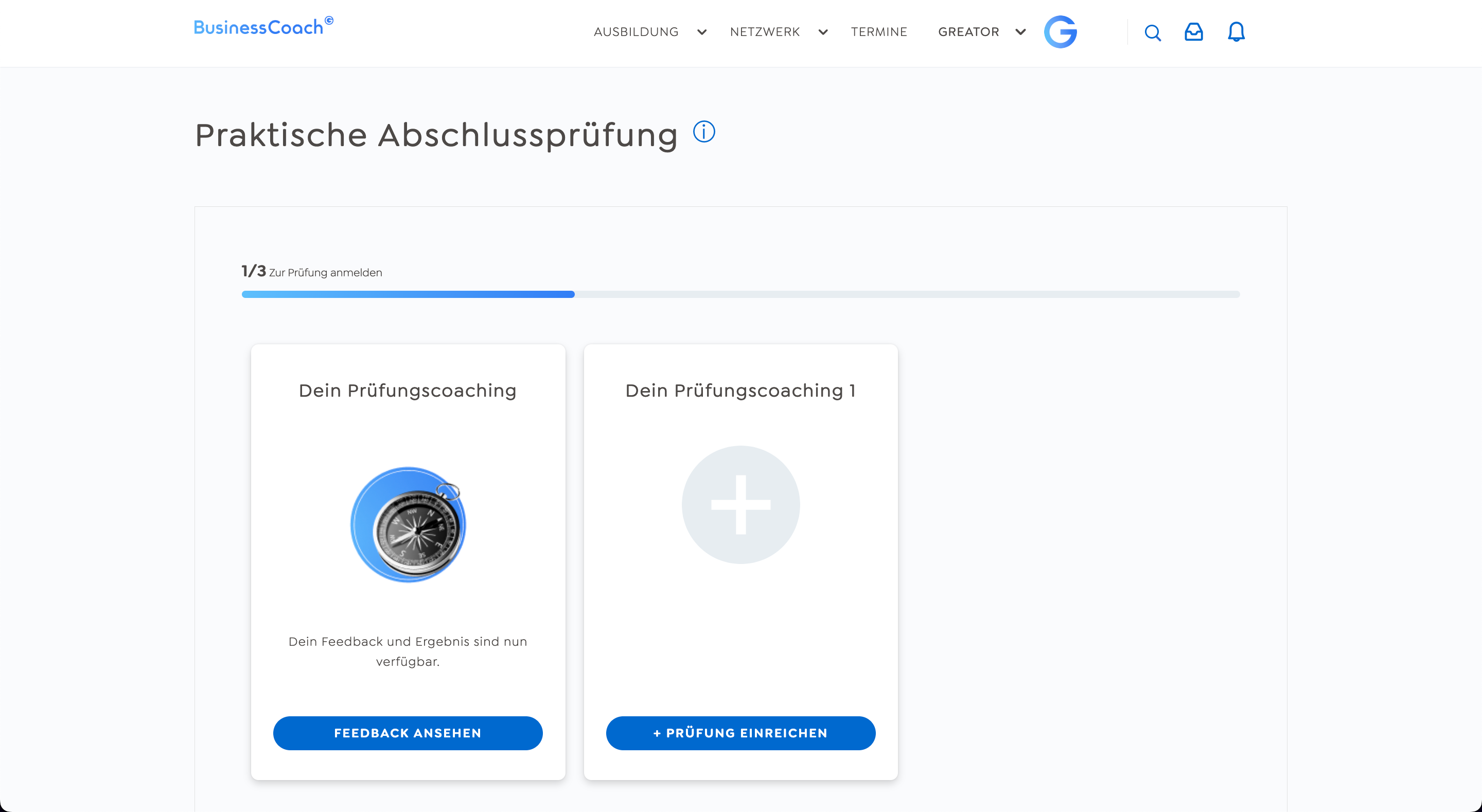

Once a participant fulfilled all the requirements for accessing the final exam (course content and final quiz completed), they’d be notified by a pop-up and an e-mail that the exam was now accessible. Through the pop-up, they could open the final exam page:

Overview page

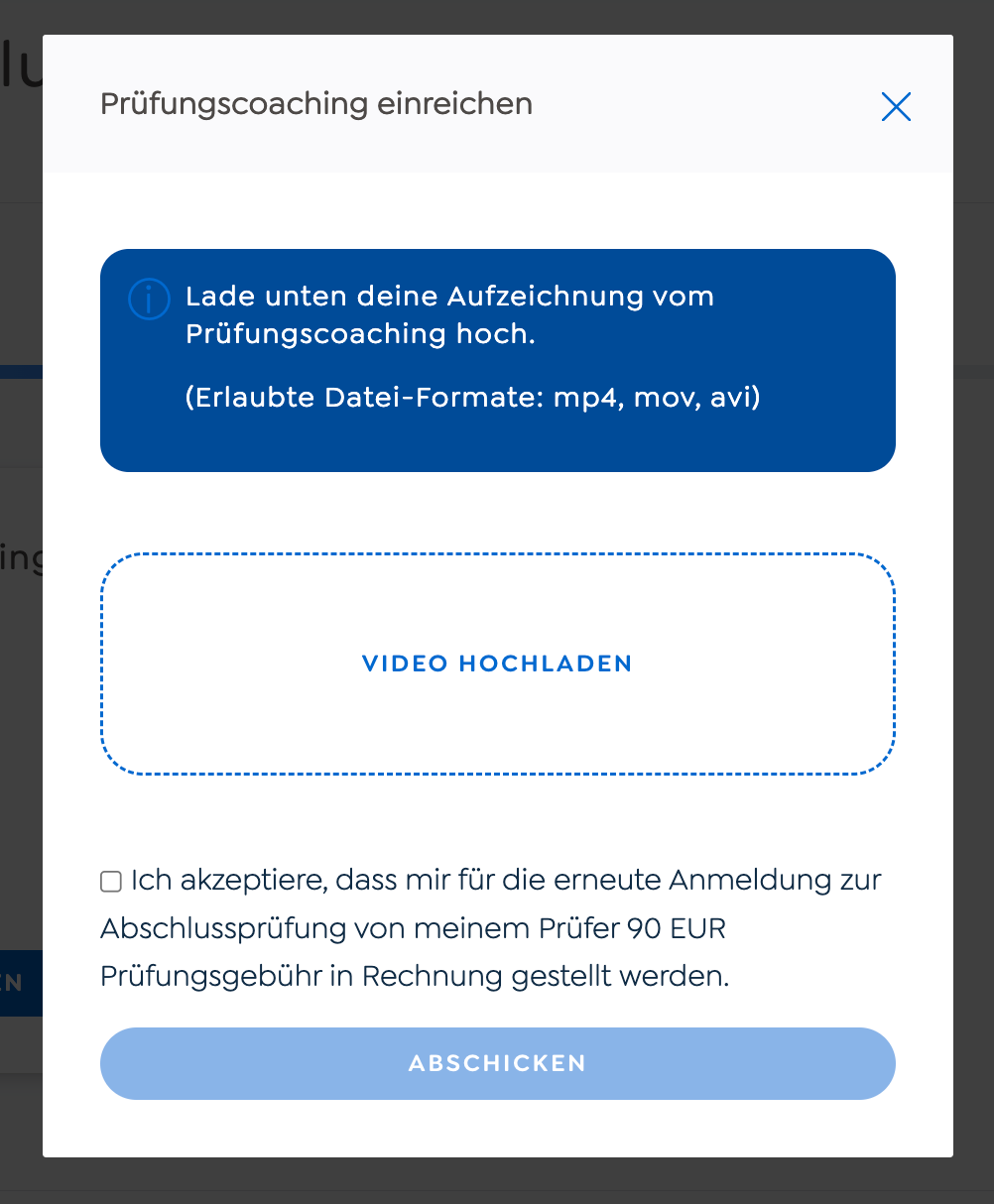

The participant can then upload the examination video. The first submission is free, but every subsequent submission must be paid for by the participant. For paid submissions, an additional page asks for their payment details.

Exam submission overlay

Throughout the journey, the progress bar and the information on the examination card show the participant where they are in the process.

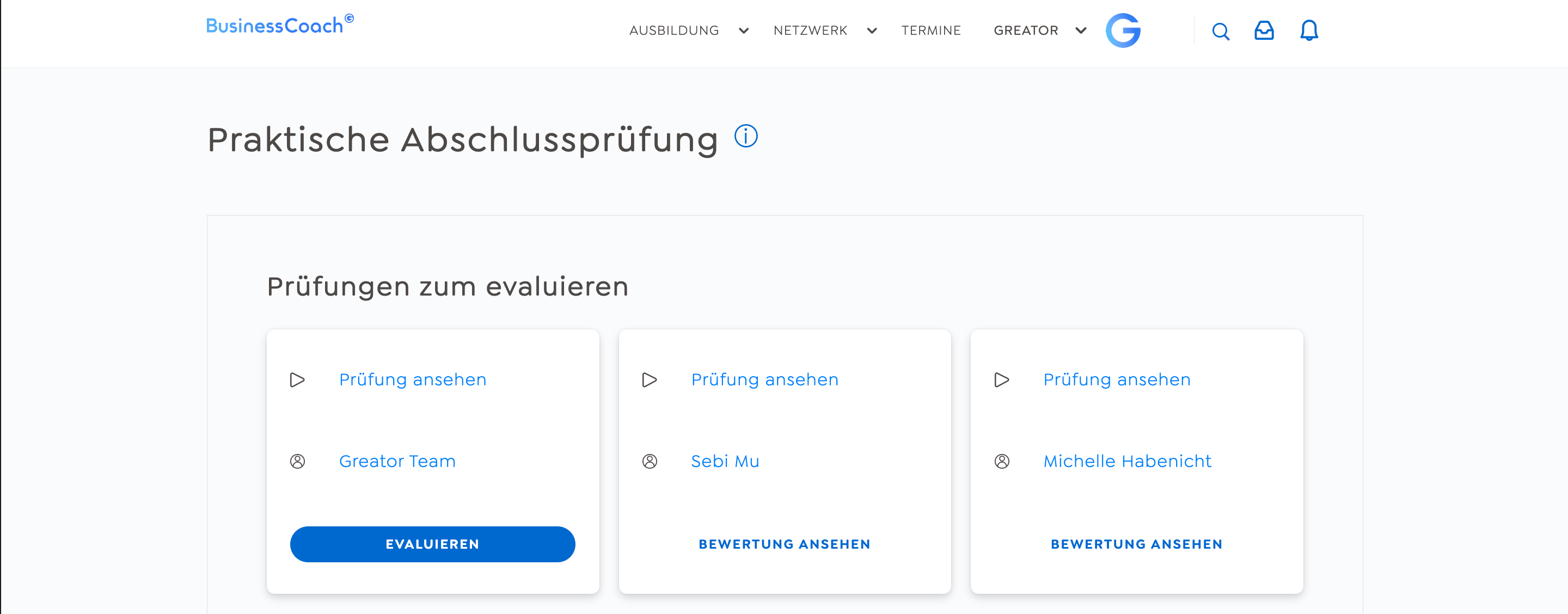

On the other side, examiners can see all the submissions on their dashboard. They can view the submitted video and give feedback through a standardized feedback form:

Examiner dashboard

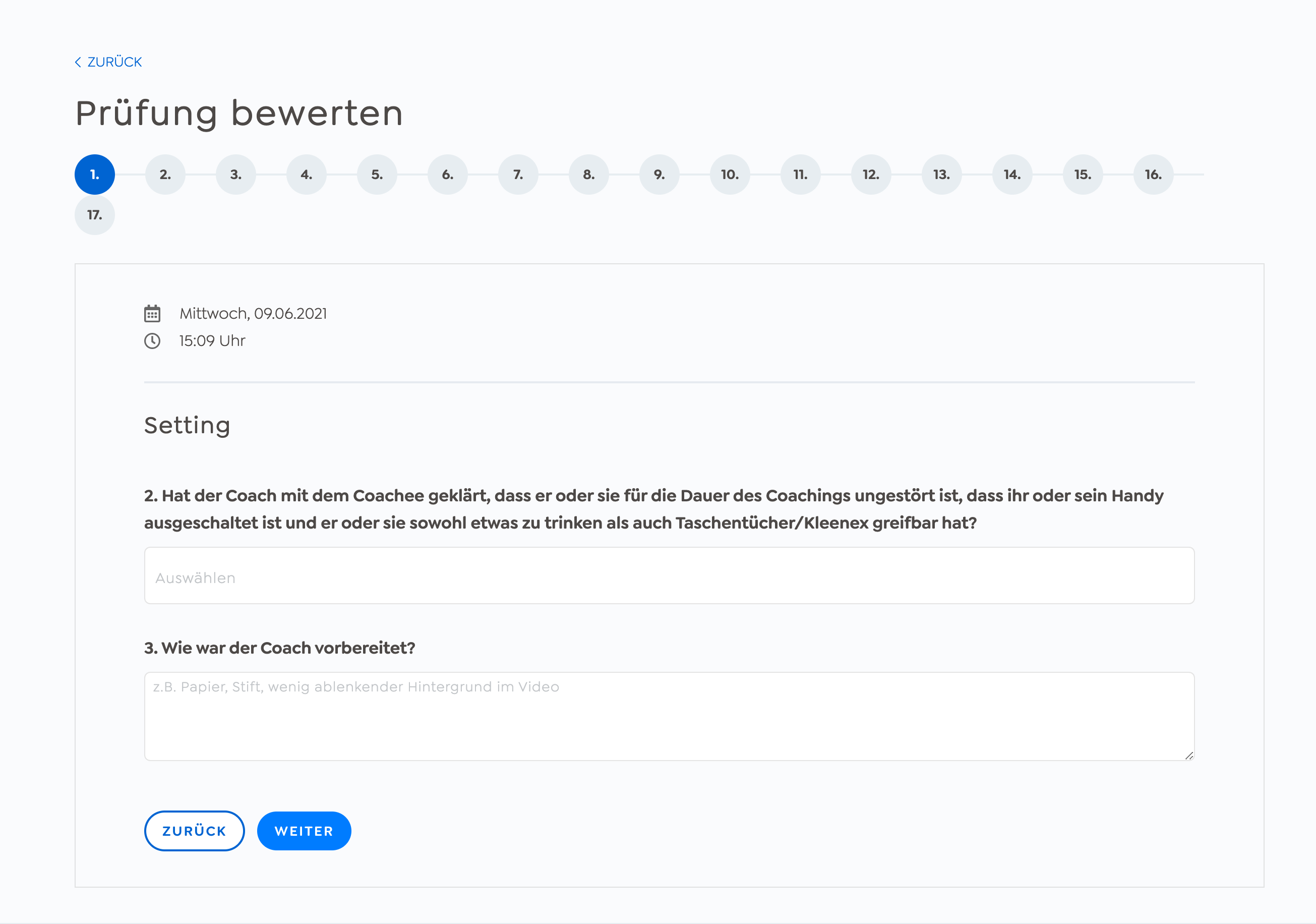

Examiner feedback form

Once the examiner has submitted their feedback, the participant is notified that their results are available. Depending on the result, they see one of two screens. If the participant didn’t pass the exam, they can view their feedback and submit another exam if they want to try again.

Screen after failed exam

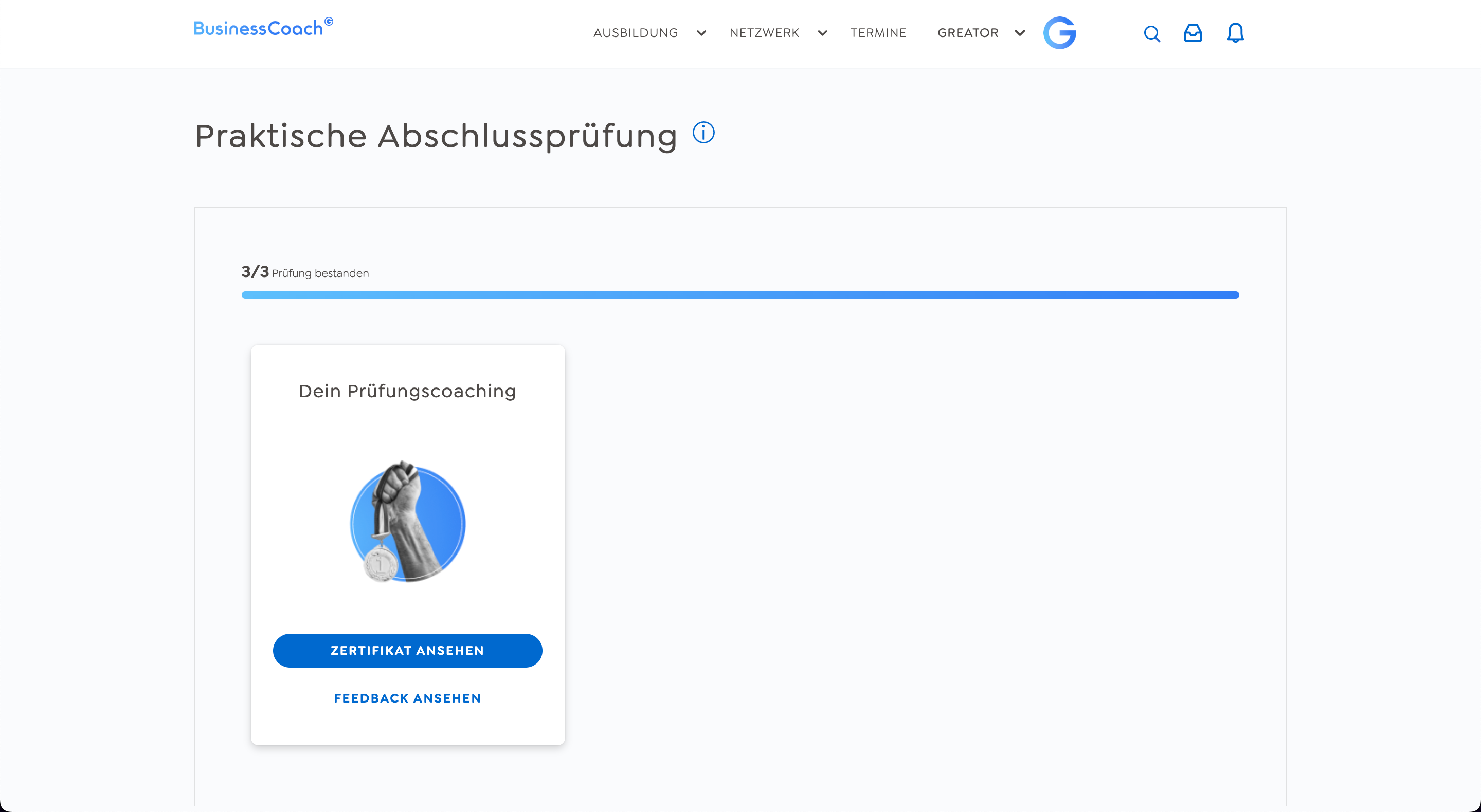

If they passed the exam, the participant can download their personalized certificate, which is automatically generated for them.

Screen after passed exam

Outcome

It’s hard to measure whether the quality of course alumni changed with this solution. But the exam kept its function of testing the participant’s practical coaching skills. Hence, it’s a reasonable assumption that coaching quality wasn’t negatively affected.

The measurable impacts were on the scalability and cost side. The scheduling frustration was eliminated: no more cancellations, re-scheduling, or fully booked calendars. Participants could submit their exams, and examiners could review them, whenever they wanted to. Furthermore, examiners needed half the time to review an exam, and participants got their results faster.